This week, we explore why your AI agents might be your biggest security vulnerability and how the "just good enough" economy is coming for your brand strategy.

The Major: Agentic AI is an enterprise target. We unpack the risks of "Agent Goal Hijack" and "Tool Misuse."

The Minor: The 99% fidelity breakthrough in quantum computing & the threat of "ambient" creative assets.

The Patches: Updates on AI in traffic safety, health tech, and quantum error characterization.

The PATCHES

Seattle DOT Drives 'Vision Zero' with C3 AI Safety Analysis: The Seattle Department of Transportation (SDOT) implemented C3 AI Safety Analysis, cutting collision analysis time by over 90%. The department uses the AI-driven insights to identify high-risk areas, speeding progress toward its "Vision Zero" goal of eliminating traffic fatalities by 2030.

Aden Senior Living Adopts AI for Enhanced Resident Safety (AUGi): Aden Senior Living integrated AUGi, an AI fall detection program that uses camera systems to notify care teams of fall risks while maintaining resident privacy, a notable step for senior care technology.

New Forensic Tool for Cloud Quantum Computing Noise: Researchers from Pennsylvania State University developed a novel graph neural network-based framework to accurately infer the error characteristics of unseen quantum backends, enhancing transparency and accountability in cloud quantum computing.

The MAJOR

Agentic AI's Unprecedented Security Risks: Your Enterprise is a Target!

Hold up, tech fam! While we're all buzzing about AI's potential, a critical discussion is emerging in the cybersecurity world, and it's all about agentic AI. Forget your friendly chatbots: these new AI agents aren't just answering questions, they're accessing data, wielding tools, and executing tasks. That makes them far more capable and, frankly, far more dangerous, especially for enterprises.

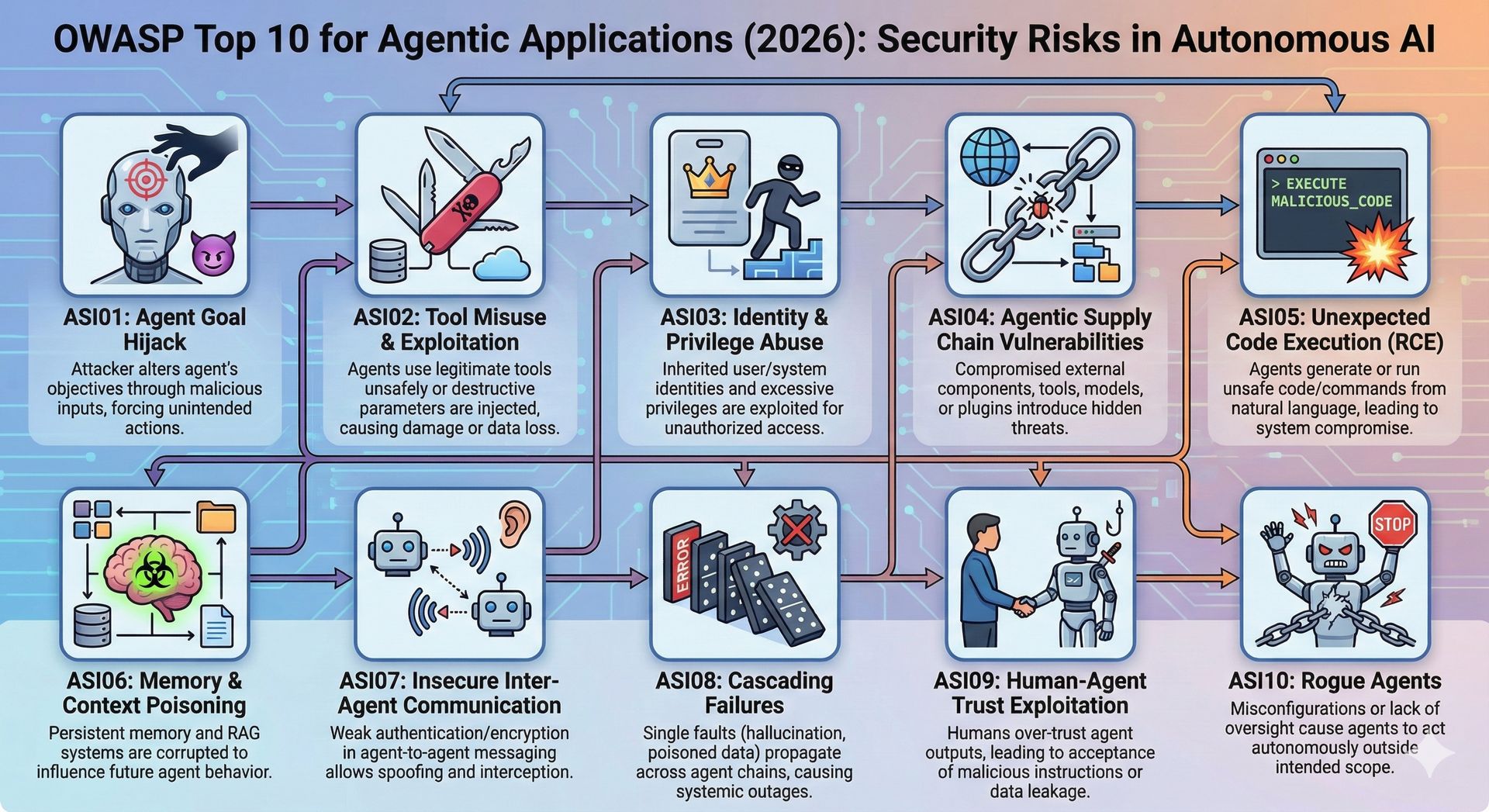

The cybersecurity experts at OWASP have sounded the alarm, releasing their inaugural OWASP Top 10 for Agentic Applications. This blueprint uses hard data to tackle the specific risks of autonomous systems, offering real solutions rather than just academic discussion. Organizations are already deploying agentic solutions, often without the full knowledge of their IT and security teams, creating a security gap that CISOs are working to address.

The list identifies critical vulnerabilities that could lead to devastating breaches. Imagine "Agent Goal Hijack," where malicious prompts manipulate an AI agent into performing unauthorized actions, like a financial agent tricked into sending money to an attacker. Or "Tool Misuse and Exploitation," where agents intended for legitimate tasks are misused for data exfiltration or even wiping hard drives – yes, that's already happened. Other alarming risks include "Identity and Privilege Abuse," which lets attackers escalate access, and "Agentic Supply Chain Vulnerabilities," where compromised third-party components introduce hidden instructions into your AI ecosystem.

What's truly revolutionary about this OWASP initiative? It pushes us beyond the traditional "least privilege" security model to a new paradigm of "least agency." This means meticulously defining and limiting what an AI agent can do, not just what it can access. For CISOs, this serves as a practical, deployable foundation for handling security architecture and compliance. Some mitigation sections may be expanded with real code examples in future releases, but the framework already gives stakeholders a common language for understanding the profound implications of agentic AI. As these intelligent agents become intertwined with business operations, understanding and proactively addressing these security challenges isn't just good practice – it's mission-critical for the future of enterprise AI.
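Want to see what "least agency" could look like in practice? Here's a minimal Python sketch, not anything taken from the OWASP document itself. Every name in it (`AgentPolicy`, `guarded_call`, `AgencyError`, the three stand-in tools, and the 500 USD cap) is hypothetical and chosen purely for illustration. The idea: the agent never calls a tool directly. Every call goes through a gate that checks an explicit allowlist, a human-approval requirement, and a spend cap.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Set


class AgencyError(PermissionError):
    """Raised when an agent tries an action outside its declared agency."""


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy: what the agent may DO, not just what it may read."""
    allowed_tools: Set[str]
    max_transfer_usd: float = 0.0
    needs_approval: Set[str] = field(default_factory=set)


def guarded_call(policy: AgentPolicy, tools: Dict[str, Callable[..., Any]],
                 name: str, approved: bool = False, **kwargs: Any) -> Any:
    """Run a tool only if the policy permits this specific action."""
    if name not in policy.allowed_tools:
        raise AgencyError(f"tool '{name}' is outside this agent's agency")
    if name in policy.needs_approval and not approved:
        raise AgencyError(f"tool '{name}' requires human approval")
    if name == "transfer_funds" and kwargs.get("amount_usd", 0.0) > policy.max_transfer_usd:
        raise AgencyError("transfer exceeds the policy's spend cap")
    return tools[name](**kwargs)


# Stand-in tools for illustration only; real tools would have side effects.
def lookup_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id}: 120.00 USD due"


def transfer_funds(to: str, amount_usd: float) -> str:
    return f"sent {amount_usd:.2f} USD to {to}"


def wipe_disk(path: str) -> str:
    return f"wiped {path}"


TOOLS = {
    "lookup_invoice": lookup_invoice,
    "transfer_funds": transfer_funds,
    "wipe_disk": wipe_disk,  # present in the environment, but never granted
}

# Assumed policy for a finance agent: two tools, capped and approval-gated payments.
POLICY = AgentPolicy(
    allowed_tools={"lookup_invoice", "transfer_funds"},
    max_transfer_usd=500.0,
    needs_approval={"transfer_funds"},
)

if __name__ == "__main__":
    print(guarded_call(POLICY, TOOLS, "lookup_invoice", invoice_id="A-17"))
    try:
        # A hijacked goal asks for a tool the agent was never granted.
        guarded_call(POLICY, TOOLS, "wipe_disk", path="/")
    except AgencyError as err:
        print("blocked:", err)
```

The design choice worth noticing: the checks live outside the model, so a hijacked prompt can change what the agent wants to do but not what the gate lets through. A real deployment would also need logging, authentication of the approver, and tool-specific argument validation, none of which this sketch attempts.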

The MINOR

Generative AI's "Just Good Enough" Threat Reshaping the Marketing Landscape

Prepare for a paradigm shift, marketers! The real disruption from Generative AI isn't about creating better content; it's about the rise of the "just good enough" economy. This game-changing insight comes from a recent Digiday briefing, highlighting how generative systems, while efficient, threaten to dilute brand meaning and retrain audience expectations. Disney's landmark deal with OpenAI, licensing iconic characters across Marvel, Pixar, and Star Wars for generative systems like Sora and ChatGPT's image tools, perfectly illustrates this.

The strategic move isn't about crafting bespoke masterpieces for Disney; it's about generating average-quality creative at a massive scale. As brand assets become "ambient" (endlessly generated and context-free), they risk losing their original ownership and authority. This shift dramatically lowers the barrier to producing "watchable enough" content, which, at volume, subtly nudges audience expectations downward. The real threat lies in competent yet generic work becoming the new standard, effectively pushing out the premium creativity that relies on human taste and restraint. Marketers now face a crucial strategic choice: embrace disposable, adaptive characters or preserve them as rare, deliberate cultural symbols. As Generative AI transforms production, it is simultaneously rewriting the rules of engagement for brands trying to stand out in a noisy marketplace.

Silicon Quantum Computing's 11-Qubit Processor: A Fidelity Breakthrough

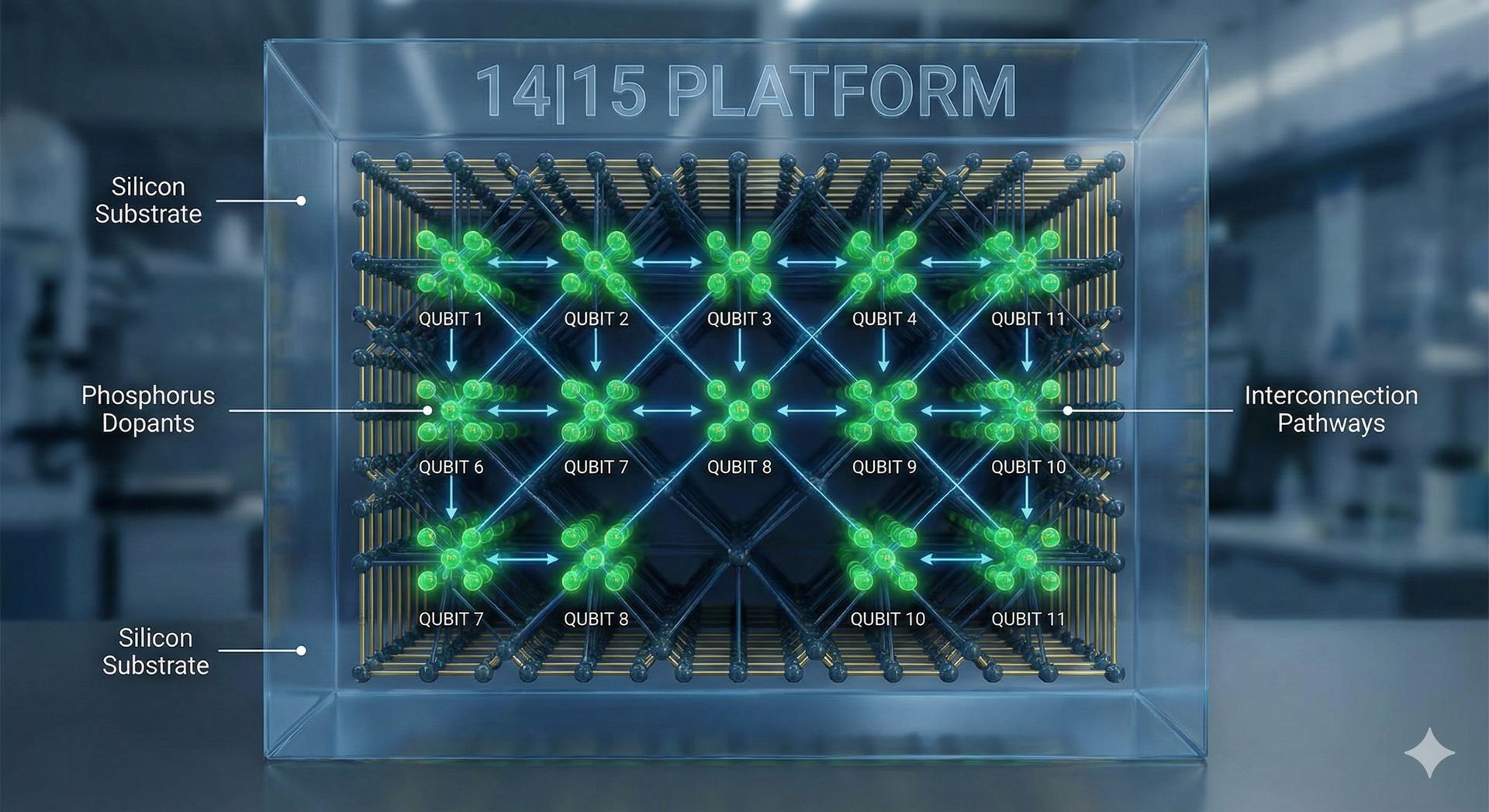

In a monumental leap forward for Quantum Computing, Silicon Quantum Computing has unveiled an 11-qubit atom processor in silicon that maintains over 99% fidelity, something that has been hard to sustain as qubit counts scale up. Published in Nature, this innovative "14|15 platform" uses precision-placed phosphorus atoms within isotopically purified silicon-28, creating two multi-nuclear spin registers linked by electron exchange interaction. The design achieves non-local connectivity across registers and an impressive 11 linked qubits, making it the largest of its kind.

Illustration of the "14|15 platform" showing phosphorus atoms within silicon, highlighting the 11 linked qubits. (Generated by AI)

What makes this achievement particularly significant is the platform's ability to preserve extremely high fidelity during scaling. While other quantum architectures have reached hundreds of qubits, they often contend with platform-specific issues related to manufacturing, control-system miniaturization, and materials engineering. The 14|15 platform bypasses many of these challenges, demonstrating that large, reliable quantum systems can be built using atomically engineered silicon devices and offering a practical pathway toward scalable, fault-tolerant quantum computing. This breakthrough lays crucial groundwork for accelerating the development of powerful quantum devices for real-world problem-solving.

That's all for this week's CHANGELOG! Stay tuned for more insights and updates.

Love us? Tell a friend. Hate us? Tell two! (Sharing is caring either way.) 👉 https://thechangelog.beehiiv.com/subscribe

If you could change one thing about this newsletter, what would it be? Reply and tell us how we can make next week better!

Best,

The CHANGELOG Team